The state of a single thread is similar to that of a process. It has a program counter (PC) that tracks where the program is fetching instructions from. Each thread has its own private set of registers it uses for computation; thus, if there are two threads that are running on a single processor, when switching from running one (T1) to running the other (T2), a context switch must take place. The context switch between threads is quite similar to the context switch between processes, as the register of T1 must be saved and the register state of T2 restored before running T2. With processes, we saved state to a process control block (PCB); now, we'll need one or more thread control blocks (TCBs) to store the state of each thread of a process. There is one major difference, though, in the context switch we perform between threads as compared to processes: the address space remains the same (i.e., there is no need to switch which page table we are using).

One other major difference between threads and processes concerns the stack. in a multi-threaded process, each thread runs independently and of course may call into various routines to do whatever work it is doing. Thus, instead of a single stack within the address space, there will be one for each thread. Let's say we have a multi-threaded process that has four threads in it. In such a case, our address space might look like this:

|---------------------|

| program code |

| |

| |

|---------------------|

| heap |

| |

| |

|---------------------|

| | |

| v |

| |

| free |

| |

| ^ |

| | |

|---------------------|

| stack 1 |

| |

|---------------------|

| free |

| |

| ^ |

| | |

|---------------------|

| stack 2 |

| |

|---------------------|

| free |

| |

| ^ |

| | |

|---------------------|

| stack 3 |

| |

|---------------------|

| free |

| |

| ^ |

| | |

|---------------------|

| stack 0 |

| |

|---------------------|

Shared data problem:

#include <stdio.h>the code sequence for doing so might look something like:

#include <pthread.h>

#include "mythreads.h"

static volatile int balance = 0;

void *

mythread(void *arg)

{

char *letter = arg;

int i;

printf("%s: begin\n", letter);

for (i = 0; i < 1e7; i++) {

balance = balance + 1;

}

printf("%s: done\n", letter);

return NULL;

}

int

main(int argc, char *argv[])

{

pthread_t p1, p2;

printf("main: begin [balance = %d]\n", balance);

Pthread_create(&p1, NULL, mythread, "A");

Pthread_create(&p2, NULL, mythread, "B");

// join waits for the threads to finish

Pthread_join(p1, NULL);

Pthread_join(p2, NULL);

printf("main: done with both [balance = %d]\n", balance);

return 0;

}

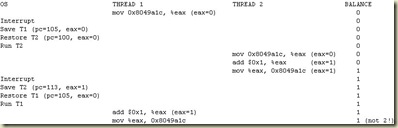

mov 0x8049a1c, %eaxImagine one of our two threads (thread 1) enters to increment balance by one. It loads the value of balance(let's say it is zero to begin with) into its register eax. Thus, eax=0 for thread 1. Now, something unfortunate happens: a timer interrupt goes off. This causes the OS to save the state of the currently running thread (its PC, its registers including eax, etc.) to the TCB for this thread.

add $0x1, %eax

mov %eax, 0x8049a1c

Now something worse happens: thread 2 is chosen to run, and it enters this same piece of code. It also executes the first instruction, getting the value of balance and putting it into its eax (remember: each thread when running has its own private registers; the registers are virtualized by the context-switch code that saves and restores them). The value of balance is still zero at this point, and thus thread 2 has eax=0. Let's then assume that thread 2 executes the next two instructions, incrementing eax by 1 (thus eax=1), and then saving the contents of eax into balance (address 0x8049a1c). Thus, the global variable balance now has the value 1.

Finally, another context switch occurs, and thread 1 resumes running. Recall that it had just executed the first mov instruction, and is now about to add 1 to eax. Recall also that eax=0. Thus, the add instruction increments eax by 1 (thus eax=1), and then the mov instruction saves it to memory (thus balance is set to 1 again).

We could get different result every time. This is called a race condition: the results depend on the timing execution of the code. we call this code a critical section. A critical section is a piece of code that accesses a shared variable (or more generally, a shared resource) and must not be concurrently executed by more than one thread. What we really want for this code is what we call mutual exclusion. This property guarantees that if one thread is executing within the critical section, the others will be prevented from doing so. All above from following link

No comments:

Post a Comment