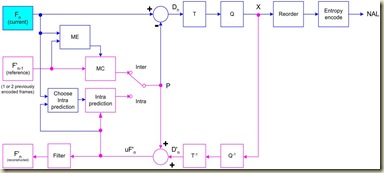

Forward path (blue lines): each block is encoded in intra or inter mode. The prediction block P is based on a reconstructed frame. As described in the earlier post “Prediction in I-frame”, in intra mode P is formed from data in the current I-frame that has previously been encoded, decoded and reconstructed. As described in the earlier post “Prediction in P-frame”, in inter mode P is formed by motion-compensated prediction from one or more reference frames. Dn is the residual (difference block) obtained by subtracting P from the current block. T is the block transform. Q is quantization. X is the set of quantized transform coefficients. NAL is the Network Abstraction Layer, used for transmission or storage. (uF’n is the unfiltered reconstructed data used for intra prediction.)

Reconstruction path (magenta lines): the quantized block coefficients X are re-scaled and inverse transformed to produce a difference block D’n. Quantization is a lossy process, so D’n is not identical to Dn. The prediction block P is added to D’n to form the reconstructed block uF’n. A loop filter is then applied to reduce blocking distortion, giving the block F’n.
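A minimal sketch of the two paths for one tiny block may help. It is only an illustration under simplifying assumptions: the transform T is treated as identity, the quantizer is a plain uniform scalar quantizer with a hypothetical step size `qstep`, and the function names `encode_block` and `reconstruct_block` are made up for this example. A real H.264 encoder uses an integer transform and a more elaborate scaling scheme.

```python
def encode_block(current, prediction, qstep):
    """Forward path: residual Dn = current - P, then quantize to levels X."""
    residual = [c - p for c, p in zip(current, prediction)]
    # Transform T is omitted (treated as identity) to keep the sketch short.
    return [round(d / qstep) for d in residual]


def reconstruct_block(levels, prediction, qstep):
    """Reconstruction path: rescale X to D'n, then add P to get uF'n."""
    residual_rec = [x * qstep for x in levels]
    # Clip to the 8-bit sample range.
    return [min(255, max(0, p + d)) for p, d in zip(prediction, residual_rec)]


current = [53, 55, 61, 67]      # samples of the current block
prediction = [50, 50, 60, 60]   # prediction block P
qstep = 4                       # assumed quantizer step size

levels = encode_block(current, prediction, qstep)
recon = reconstruct_block(levels, prediction, qstep)

print("Dn   =", [c - p for c, p in zip(current, prediction)])  # [3, 5, 1, 7]
print("X    =", levels)                                        # [1, 1, 0, 2]
print("D'n  =", [x * qstep for x in levels])                   # [4, 4, 0, 8]
print("uF'n =", recon)                                         # [54, 54, 60, 68]
```

The reconstructed block differs from the original block because the rounding in the quantizer cannot be undone by re-scaling.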

The purpose of the reconstruction path is to ensure that the encoder and the decoder use identical reference frames to create the prediction P.
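Why identical references matter can be seen in a toy one-sample-per-frame predictor. This is a sketch, not H.264 itself: each "frame" is a single number, the prediction is simply the previous frame, and `quantize`, `encode` and `decode` are hypothetical helpers. With `closed_loop=False` the encoder predicts from the original previous sample, which the decoder never sees; with `closed_loop=True` it predicts from the reconstructed sample, as the reconstruction path does.

```python
import math


def quantize(d, qstep):
    """Uniform quantizer, rounding half up."""
    return math.floor(d / qstep + 0.5)


def encode(samples, qstep, closed_loop):
    levels, pred = [], 0
    for s in samples:
        x = quantize(s - pred, qstep)
        levels.append(x)
        # Closed loop: predict from what the decoder will reconstruct.
        # Open loop: predict from the original sample (decoder cannot do this).
        pred = pred + x * qstep if closed_loop else s
    return levels


def decode(levels, qstep):
    rec, pred = [], 0
    for x in levels:
        pred = pred + x * qstep
        rec.append(pred)
    return rec


samples = [11, 14, 17, 20, 23]
qstep = 4

for closed_loop in (False, True):
    rec = decode(encode(samples, qstep, closed_loop), qstep)
    errors = [abs(r - s) for r, s in zip(rec, samples)]
    print("closed_loop =", closed_loop, "errors =", errors)
# closed_loop = False errors = [1, 2, 3, 4, 5]
# closed_loop = True errors = [1, 2, 1, 0, 1]
```

In the open-loop case the encoder and decoder predictions diverge and the error accumulates from frame to frame; in the closed-loop case it stays within half a quantizer step.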

[update: 9/16/2010] In the figure above, the output of ME (motion estimation) is a motion vector (deltaX, deltaY). For example, for one 16x16 macroblock in Fn, ME performs a block search and block matching against the reference frame and yields the motion vector. MC (motion compensation) then uses (deltaX, deltaY) to fetch the predicted macroblock from frame F'n-1.
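The ME and MC steps can be sketched as an exhaustive block search. The code below is an illustration only, with several assumptions: it uses a 4x4 block on an 8x8 frame instead of a 16x16 macroblock, integer-pixel displacements only, the sum of absolute differences (SAD) as the matching cost, and made-up function names (`sad`, `motion_estimate`, `motion_compensate`). Practical encoders use faster search patterns and sub-pixel refinement.

```python
def sad(cur, cx, cy, ref, rx, ry, size):
    """Sum of absolute differences between two size x size blocks."""
    return sum(abs(cur[cy + j][cx + i] - ref[ry + j][rx + i])
               for j in range(size) for i in range(size))


def motion_estimate(cur, ref, bx, by, size, search):
    """ME: full search in [-search, search]; returns (deltaX, deltaY)."""
    h, w = len(ref), len(ref[0])
    best_mv, best_cost = (0, 0), None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            rx, ry = bx + dx, by + dy
            if rx < 0 or ry < 0 or rx + size > w or ry + size > h:
                continue  # candidate block falls outside the reference frame
            cost = sad(cur, bx, by, ref, rx, ry, size)
            if best_cost is None or cost < best_cost:
                best_mv, best_cost = (dx, dy), cost
    return best_mv


def motion_compensate(ref, bx, by, mv, size):
    """MC: fetch the predicted block from the reference frame using mv."""
    dx, dy = mv
    return [row[bx + dx:bx + dx + size] for row in ref[by + dy:by + dy + size]]


# Synthetic 8x8 reference frame F'n-1 and a current frame Fn whose content
# is the reference content displaced by (2, 1).
N = 8
ref = [[7 * x + 13 * y for x in range(N)] for y in range(N)]
cur = [[ref[min(y + 1, N - 1)][min(x + 2, N - 1)] for x in range(N)]
       for y in range(N)]

mv = motion_estimate(cur, ref, bx=2, by=2, size=4, search=2)
predicted = motion_compensate(ref, 2, 2, mv, 4)
print("motion vector:", mv)  # (2, 1)
```

Here the predicted block matches the current block exactly, so the residual Dn for this block would be all zeros.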

Note that the reconstructed frame used for intra prediction is taken before the loop filter, because filtering changes the pixel values around the intra-predicted blocks. [#]

The encoding process therefore includes decoding. The decoding process is shown in the following figure.

Two former posts are listed here and here.
